The Basics of Neural Networks

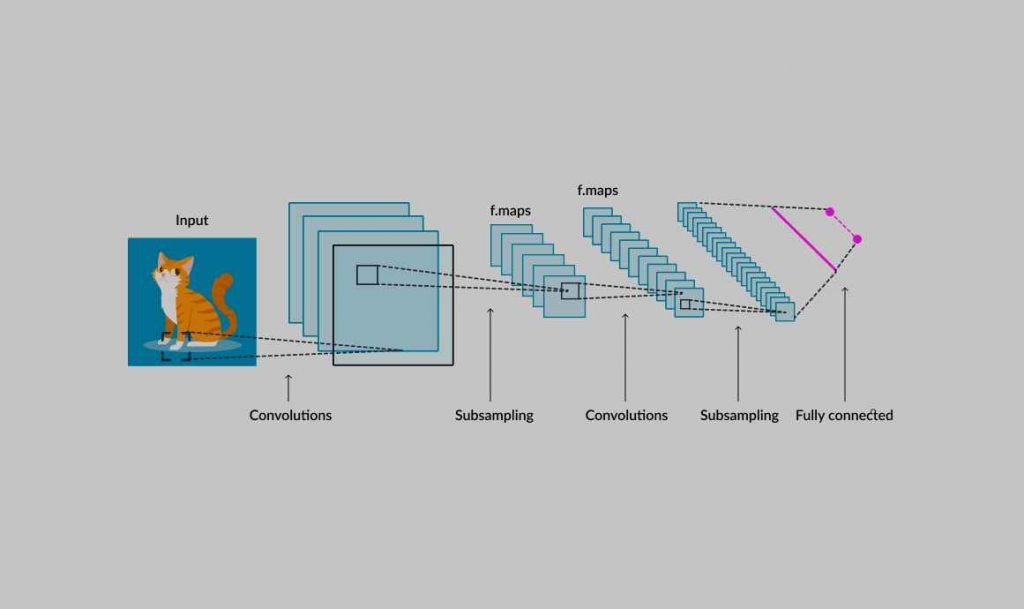

Before we dive into the code, let's cover the fundamentals. Neural networks are computational models loosely inspired by the human brain. They consist of layers of interconnected nodes, or neurons, that work together to map inputs to outputs. Each connection between nodes has a weight, and during training these weights are adjusted to reduce the error in the network's predictions.

The Perceptron - Our Building Block

The perceptron, the simplest kind of neural network, is the fundamental building block of larger networks. It multiplies each input by a weight, sums the results, adds a bias, and passes the total through an activation function. The activation function decides whether the neuron should "fire" or not; in the code below it is a simple step function that outputs 1 when the total is positive and 0 otherwise.

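    class Perceptron:
        def __init__(self, num_inputs):
            # Start with every weight and the bias at zero.
            self.weights = [0] * num_inputs
            self.bias = 0

        def activate(self, inputs):
            # Weighted sum of the inputs plus the bias.
            result = sum(x * w for x, w in zip(inputs, self.weights)) + self.bias
            # Step activation: fire (1) only when the sum is positive.
            return 1 if result > 0 else 0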
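The class above only computes an output; it never changes its own weights. As a minimal sketch of how training could adjust them, the snippet below applies the classic perceptron learning rule to the logical AND function. The `train` helper, the learning rate of 0.5, and the epoch count are assumptions made for this illustration and are not part of the original class, which is repeated here so the snippet runs on its own.

```python
class Perceptron:
    def __init__(self, num_inputs):
        self.weights = [0] * num_inputs
        self.bias = 0

    def activate(self, inputs):
        result = sum(x * w for x, w in zip(inputs, self.weights)) + self.bias
        return 1 if result > 0 else 0


def train(perceptron, samples, learning_rate=0.5, epochs=20):
    """Hypothetical helper: the perceptron learning rule.

    For each sample, nudge the weights and bias in the direction
    that reduces the error (target minus prediction).
    """
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - perceptron.activate(inputs)
            perceptron.weights = [
                w + learning_rate * error * x
                for w, x in zip(perceptron.weights, inputs)
            ]
            perceptron.bias += learning_rate * error


# Truth table for logical AND: (inputs, expected output).
and_samples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

and_gate = Perceptron(num_inputs=2)
train(and_gate, and_samples)

predictions = [and_gate.activate(inputs) for inputs, _ in and_samples]
print(predictions)  # [0, 0, 0, 1]
```

AND works here because it is linearly separable: a single straight line can divide the inputs that should produce 1 from those that should produce 0. This rule is not guaranteed to settle on problems that lack that property.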

Building Our Neural Network

Layering Up - Creating a Multi-Layer Perceptron (MLP)

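Now that we've covered the perceptron, let's move on to the multi-layer perceptron (MLP). An MLP consists of an input layer, one or more hidden layers, and an output layer. Each layer feeds its outputs to the next, and learning typically happens through a process called backpropagation. The class below uses a single hidden layer and implements only the forward pass; backpropagation would also need a differentiable activation function in place of the perceptron's step function.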

    class MLP:
        def __init__(self, num_inputs, num_hidden, num_output):
            # Each hidden neuron sees every input.
            self.hidden_layer = [Perceptron(num_inputs) for _ in range(num_hidden)]
            # Each output neuron sees every hidden neuron's output.
            self.output_layer = [Perceptron(num_hidden) for _ in range(num_output)]

        def forward(self, inputs):
            # Pass the inputs through the hidden layer, then the output layer.
            hidden_outputs = [neuron.activate(inputs) for neuron in self.hidden_layer]
            final_output = [neuron.activate(hidden_outputs) for neuron in self.output_layer]
            return final_output
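Because every weight starts at zero, a freshly constructed `MLP` returns 0 for every input. To show what the hidden layer adds, the sketch below sets the weights by hand so that the network computes XOR, a function that no single perceptron can represent. The specific weight and bias values are illustrative choices rather than learned values, and both classes are repeated so the snippet runs on its own.

```python
class Perceptron:
    def __init__(self, num_inputs):
        self.weights = [0] * num_inputs
        self.bias = 0

    def activate(self, inputs):
        result = sum(x * w for x, w in zip(inputs, self.weights)) + self.bias
        return 1 if result > 0 else 0


class MLP:
    def __init__(self, num_inputs, num_hidden, num_output):
        self.hidden_layer = [Perceptron(num_inputs) for _ in range(num_hidden)]
        self.output_layer = [Perceptron(num_hidden) for _ in range(num_output)]

    def forward(self, inputs):
        hidden_outputs = [neuron.activate(inputs) for neuron in self.hidden_layer]
        return [neuron.activate(hidden_outputs) for neuron in self.output_layer]


xor_net = MLP(num_inputs=2, num_hidden=2, num_output=1)

# Hand-picked values (assumptions for this example, not trained).
# Hidden neuron 0 behaves like OR: fires when at least one input is 1.
xor_net.hidden_layer[0].weights = [1, 1]
xor_net.hidden_layer[0].bias = -0.5
# Hidden neuron 1 behaves like AND: fires only when both inputs are 1.
xor_net.hidden_layer[1].weights = [1, 1]
xor_net.hidden_layer[1].bias = -1.5
# The output fires when OR is on and AND is off, which is XOR.
xor_net.output_layer[0].weights = [1, -1]
xor_net.output_layer[0].bias = -0.5

xor_inputs = [[0, 0], [0, 1], [1, 0], [1, 1]]
xor_outputs = [xor_net.forward(inputs) for inputs in xor_inputs]
print(xor_outputs)  # [[0], [1], [1], [0]]
```

Note that `forward` returns a list with one entry per output neuron, so even a single-output network yields a one-element list.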

Why DIY Neural Networks?

Building a neural network from scratch might seem like reinventing the wheel, especially with powerful frameworks like TensorFlow and PyTorch available. However, understanding the fundamentals is crucial for anyone serious about delving into the world of AI and machine learning. It's like learning to cook from scratch before becoming a master chef.

Common Pitfalls and Errors

Watch Out for Overfitting

Overfitting is a common issue in machine learning. It occurs when a model fits the training data too closely, noise included, and then performs poorly on new, unseen data. Regularization techniques like dropout and L2 regularization can help mitigate this problem.
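As a rough illustration of L2 regularization, the sketch below adds a penalty proportional to each weight to a plain gradient-descent update, which pulls every weight slightly toward zero on each step. The function name `sgd_step_l2`, the learning rate, and the penalty strength `l2_lambda` are assumptions made for this example, and the gradients are placeholder numbers rather than values from a real model.

```python
def sgd_step_l2(weights, gradients, learning_rate=0.1, l2_lambda=0.01):
    """One gradient-descent step with an L2 penalty (hypothetical helper).

    Adding (l2_lambda / 2) * sum(w ** 2) to the loss contributes
    l2_lambda * w to the gradient of each weight.
    """
    return [
        w - learning_rate * (g + l2_lambda * w)
        for w, g in zip(weights, gradients)
    ]


weights = [2.0, -3.0]
zero_gradients = [0.0, 0.0]  # placeholder: pretend the data loss is flat here

# With no gradient from the data, the penalty alone shrinks each weight.
shrunk = sgd_step_l2(weights, zero_gradients)
print(shrunk)
```

Larger values of `l2_lambda` shrink the weights more aggressively, trading some fit on the training data for a simpler model.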

The Vanishing Gradient Problem

In deep neural networks, the vanishing gradient problem can hinder learning in the early layers. As gradients are multiplied through many layers during backpropagation, they can shrink until they are too small to update the early weights meaningfully. Activation functions like ReLU (Rectified Linear Unit) and techniques like batch normalization can alleviate this issue.
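A small numeric sketch can make the effect concrete. During backpropagation, the gradient reaching an early layer includes one activation-derivative factor for every layer it passes through. The snippet below multiplies those factors for a hypothetical 10-layer chain, ignoring the weights entirely (a simplifying assumption), and compares sigmoid at its steepest point with ReLU at a positive input.

```python
import math


def sigmoid_derivative(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)


def relu_derivative(z):
    return 1.0 if z > 0 else 0.0


def chained_gradient(derivative, pre_activation, num_layers):
    """Multiply one derivative factor per layer (weights are ignored)."""
    gradient = 1.0
    for _ in range(num_layers):
        gradient *= derivative(pre_activation)
    return gradient


num_layers = 10  # illustrative depth

# Sigmoid's derivative peaks at 0.25 (at z = 0), so this is its best case.
sigmoid_grad = chained_gradient(sigmoid_derivative, 0.0, num_layers)
# ReLU's derivative is exactly 1 for any positive input.
relu_grad = chained_gradient(relu_derivative, 1.0, num_layers)

print(f"sigmoid: {sigmoid_grad:.2e}")  # sigmoid: 9.54e-07
print(f"relu:    {relu_grad:.2e}")     # relu:    1.00e+00
```

Even in sigmoid's best case the signal shrinks by roughly six orders of magnitude over ten layers, while the ReLU factors leave it unchanged. In a real network the weights also scale the gradient, so this is only one part of the picture.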

Modern Frameworks and Influential Minds

Frameworks that Make Life Easier

While building from scratch is a fantastic learning experience, using established frameworks like TensorFlow and PyTorch can significantly speed up the development process. These tools provide high-level abstractions, making it easier to experiment with complex architectures.

Faces in the Neural Network Crowd

The field of neural networks is populated by brilliant minds. Geoffrey Hinton, often referred to as the godfather of deep learning, and Andrew Ng, co-founder of Google Brain, are just two of the titans shaping the landscape.

Quote of Wisdom

In words attributed to Geoffrey Hinton, "We can't take all the humans and turn them into AI experts, but we can take all the AI and turn it into a tool that experts use."

Frequently Asked Questions

Is building a neural network from scratch practical?

As a learning exercise, absolutely! It provides a solid understanding of the inner workings of neural networks, which is invaluable when working on more complex projects or troubleshooting issues.

Can I use the neural network I built for real-world applications?

While your DIY network may not outperform cutting-edge models, it's a fantastic starting point. Real-world applications often involve more sophisticated architectures and extensive training on vast datasets.

Should I always build from scratch or use frameworks?

Both approaches have their merits. Starting from scratch enhances understanding, while frameworks expedite development. The best approach depends on your learning goals and project requirements.

Embark on this coding adventure, and you'll unlock the secrets of neural networks, one line of Python at a time. Happy coding!